For a long time, a single-board ARM computer and a spare hard drive were all it took to call yourself a homelabber. Raspberry Pi made the whole thing approachable. Costs stayed low, power draw was negligible, and a thriving community meant answers were never far away.

But as home networks got faster, media libraries grew heavier, and self-hosting expanded beyond a single app into a whole ecosystem of services, the same hardware that once felt liberating started feeling like a ceiling. The conversation in homelab communities has quietly moved from "how do I set this up?" to "why won't this run properly?" That question, more often than not, points back to the architecture underneath.

Why ARM Still Dominates Entry-Level Homelabs in 2026

ARM-based single-board computers hold a real and legitimate place in the homelab landscape. The Raspberry Pi 5, running a quad-core Arm Cortex-A76 at 2.4GHz with up to 16GB of RAM, handles Pi-hole, lightweight Nextcloud instances, and basic home automation without complaint. Power consumption sits at roughly 3W at idle, which adds up to meaningful savings over a year of 24/7 operation.

The ecosystem built around these boards is genuinely impressive. Years of community guides, pre-built OS images, and active forums mean almost any problem has a documented solution. For someone just getting into self-hosting, or running a handful of low-demand containers, ARM remains a perfectly sensible entry point.

The Real Limits of Running ARM in Your Homelab

There is a specific frustration that ARM homelabbers tend to hit around the same point in their journey. The setup that ran smoothly with two or three Docker containers starts misbehaving once pushed harder. The root causes are worth understanding clearly, because they are architectural rather than incidental. Three distinct limitations keep surfacing across community discussions, and they each affect a different layer of the stack.

Software Compatibility

Many server applications ship pre-compiled x86-64 binaries as their primary release format. ARM builds exist for popular tools like Plex, Jellyfin, and Nextcloud, but across the wider self-hosting ecosystem ARM releases can lag behind in features, receive security patches more slowly, or require compiling from source. Hypervisors like Proxmox VE and firewall distributions such as pfSense and OPNsense are developed primarily for x86-64, and general-purpose ARM boards are not among their officially supported platforms. Running them on ARM means unofficial ports or workarounds that range from inconvenient to genuinely unstable.

Expansion and I/O

Most ARM single-board computers connect storage and peripherals over USB or a restricted PCIe interface. USB-attached storage works for light NAS duties, but under simultaneous read/write pressure from multiple clients, the throughput ceiling becomes apparent quickly. True PCIe expansion, the kind that accommodates NVMe drives, 10GbE network cards, or compute accelerators, is either absent or restricted to a single slow lane on most consumer ARM boards.
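To put the throughput ceiling in rough numbers, the Python sketch below converts published signalling rates and line encodings into theoretical payload bandwidth. The list of links is our own selection for illustration (the Raspberry Pi 5's external PCIe connector, for example, is officially rated at PCIe 2.0 x1), and real transfers land lower once protocol overhead and drive speed are counted.

```python
# Theoretical payload bandwidth after line encoding, before protocol overhead.
# Real-world throughput is lower (packet headers, controller limits, disk speed).

def link_mb_per_s(gigatransfers_per_s: float, lanes: int, payload_bits: int, coded_bits: int) -> float:
    """Return usable MB/s (1 MB = 10**6 bytes) for a serial link."""
    bits_per_s = gigatransfers_per_s * 1e9 * lanes * payload_bits / coded_bits
    return bits_per_s / 8 / 1e6

LINKS = {
    # name: (GT/s per lane, lanes, payload bits, coded bits)
    "USB 3.0 (8b/10b)": (5.0, 1, 8, 10),
    "PCIe 2.0 x1 (8b/10b)": (5.0, 1, 8, 10),
    "PCIe 3.0 x1 (128b/130b)": (8.0, 1, 128, 130),
    "PCIe 3.0 x4 (128b/130b)": (8.0, 4, 128, 130),
}

# Ethernet speeds are quoted as data rates, so no encoding factor is applied.
NIC_LINE_RATE_MB_S = {"2.5GbE": 2.5e9 / 8 / 1e6, "10GbE": 10e9 / 8 / 1e6}

if __name__ == "__main__":
    for name, params in LINKS.items():
        print(f"{name:26s} {link_mb_per_s(*params):7.0f} MB/s")
    for nic, need in NIC_LINE_RATE_MB_S.items():
        print(f"{nic} at line rate needs about {need:.0f} MB/s")
```

On these figures a USB 3.0 port or a single PCIe 2.0 lane tops out around 500 MB/s, well short of what a saturated 10GbE card moves (about 1,250 MB/s), while PCIe 3.0 x4 offers roughly 3,900 MB/s.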

Sustained Load Behavior

The ARM SoCs in consumer single-board computers are designed with mobile and embedded workloads in mind, where burst performance matters more than continuous throughput. Running a transcoding job alongside several active containers and a scheduled backup creates the kind of steady-state load these chips were never optimized for. Thermal throttling under continuous workloads is a documented issue across multiple ARM board generations, and boards that rely on passive cooling, or none at all, reach their thermal limits sooner.
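On a Raspberry Pi, throttling can be confirmed rather than guessed: the firmware's vcgencmd get_throttled command prints a bitmask such as throttled=0x50005. This minimal Python sketch decodes it using the bit layout given in the Raspberry Pi documentation; the helper name decode_throttled is our own. "Has occurred" bits that show up after a long transcode or backup point to the sustained-load behaviour described above (under-voltage is reported too, since a weak power supply causes similar symptoms).

```python
import re
import subprocess
from typing import List

# Bit meanings as listed in the Raspberry Pi documentation for `vcgencmd get_throttled`.
THROTTLE_BITS = {
    0: "under-voltage detected",
    1: "Arm frequency capped",
    2: "currently throttled",
    3: "soft temperature limit active",
    16: "under-voltage has occurred",
    17: "Arm frequency capping has occurred",
    18: "throttling has occurred",
    19: "soft temperature limit has occurred",
}

def decode_throttled(output: str) -> List[str]:
    """Turn vcgencmd output such as 'throttled=0x50005' into readable flags."""
    match = re.search(r"throttled=(0x[0-9a-fA-F]+)", output)
    if match is None:
        raise ValueError(f"unexpected vcgencmd output: {output!r}")
    value = int(match.group(1), 16)
    # Report every documented bit that is set, lowest bit first.
    return [text for bit, text in sorted(THROTTLE_BITS.items()) if value & (1 << bit)]

if __name__ == "__main__":
    # vcgencmd ships with Raspberry Pi OS; this call fails on other systems.
    result = subprocess.run(["vcgencmd", "get_throttled"], capture_output=True, text=True, check=True)
    for flag in decode_throttled(result.stdout) or ["no throttling flags set"]:
        print(flag)
```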

Why x86 Gives Your Homelab More Room to Grow

The shift to x86 in small form-factor server hardware is not a recent development, but the economics improved significantly with Intel's Alder Lake-N processor line. Chips like the N100 and N150 deliver quad-core performance at a rated TDP of 6W, making always-on deployment genuinely practical without a loud fan or a notable electricity bill. The advantages line up directly against the areas where ARM consistently struggles.

| Area | ARM Homelab | x86 Homelab |
| --- | --- | --- |
| OS support | Custom images, limited distros | Any standard Linux distro, full Proxmox/TrueNAS support |
| Virtualization | KVM possible via Arm virtualization extensions, but no official Proxmox VE build | KVM with Intel VT-x, near-native VM performance |
| Networking | Typically 1GbE max | Dual 2.5GbE common, 10GbE via PCIe |
| PCIe expansion | Absent or restricted, often a single lane | x4 slot on many boards for NVMe, NICs, accelerators |
| Software ecosystem | ARM builds, often delayed | Native x86 binaries, no recompilation needed |

On the software side, any Linux distribution that runs on a standard laptop installs on x86 homelab hardware without modification. Proxmox VE deploys from the official ISO. TrueNAS SCALE runs exactly as documented. pfSense and OPNsense behave the way their wikis describe. The absence of architecture-specific friction means time is spent on actual configuration rather than compatibility debugging.

Virtualization tells a similar story. KVM, the kernel-based hypervisor native to Linux, runs with hardware-assisted virtualization through Intel VT-x extensions, allowing multiple isolated virtual machines to share one physical host with near-native performance. Running a dedicated VM for a media server, a separate one for a firewall, and a third for development work is a routine x86 homelab configuration. Attempting the same on ARM involves tradeoffs that erode the benefits quickly.
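A quick way to confirm that an x86 host is ready for KVM is to look for the vmx (Intel VT-x) or svm (AMD-V) flag in /proc/cpuinfo and to check that /dev/kvm exists. This Python sketch does both; the function names are illustrative. Depending on kernel version and firmware settings, the CPU flag alone may not prove KVM is usable, so the /dev/kvm check, which reflects whether the kernel's KVM support is active, is the one that matters for Proxmox or plain KVM.

```python
import os
from typing import Optional

def virtualization_flag(cpuinfo_text: str) -> Optional[str]:
    """Return 'vmx' (Intel VT-x) or 'svm' (AMD-V) if the x86 'flags' line lists it."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":  # x86 only; arm64 kernels use a 'Features' line instead
            flags = set(value.split())
            if "vmx" in flags:
                return "vmx"
            if "svm" in flags:
                return "svm"
    return None

def kvm_device_present(path: str = "/dev/kvm") -> bool:
    """True when the kernel exposes the KVM device node that hypervisors open."""
    return os.path.exists(path)

if __name__ == "__main__":
    with open("/proc/cpuinfo") as f:
        flag = virtualization_flag(f.read())
    print("CPU virtualization flag:", flag or "not found")
    print("/dev/kvm present:", kvm_device_present())
```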

Is Your Homelab Outgrowing Its ARM Board?

For many homelabbers, the honest answer is yes. Once a setup moves beyond a couple of containers into real multi-service territory, ARM boards tend to show their limits in predictable ways. A few specific scenarios come up repeatedly in community discussions and are worth recognizing early.

Media server transcoding is the most common tipping point. Plex and Jellyfin both support hardware-accelerated transcoding on Intel processors through Quick Sync Video. On a modern Intel N-series chip, converting a 4K HEVC stream to H.264 for a client that cannot play it natively consumes a fraction of the CPU headroom that software transcoding demands. ARM boards either lack this acceleration pathway entirely or support it inconsistently, depending on the driver stack. For anyone targeting multiple simultaneous streams, x86 is the practical choice.
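When Plex or Jellyfin runs in a container, Quick Sync is only available if the Intel GPU's render node (usually /dev/dri/renderD128) is passed through to it. The Python sketch below lists render nodes on the host and builds matching --device arguments for docker run; it assumes a Docker-based setup with the Intel graphics driver already loaded, and the helper names are hypothetical. The media server's own documentation covers the remaining steps, such as giving the container user access to the render group.

```python
import glob
import os
from typing import List

def render_nodes(dri_dir: str = "/dev/dri") -> List[str]:
    """Return DRM render nodes such as /dev/dri/renderD128, sorted by name."""
    return sorted(glob.glob(os.path.join(dri_dir, "renderD*")))

def docker_device_args(nodes: List[str]) -> List[str]:
    """Build '--device host:container' pairs mapping each node to the same path."""
    args: List[str] = []
    for node in nodes:
        args += ["--device", f"{node}:{node}"]
    return args

if __name__ == "__main__":
    nodes = render_nodes()
    if not nodes:
        print("No render nodes found: check that the iGPU driver is loaded")
    else:
        print("docker run", " ".join(docker_device_args(nodes)), "...")
```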

Game server hosting exposes similar gaps. Most community-run multiplayer game servers distribute binaries compiled primarily for x86, and running them on ARM often means relying on emulation through QEMU or unofficial community builds that may not stay current with upstream releases. Beyond compatibility, sustained single-threaded performance, which game server tick rates depend on heavily, favors modern x86 chips over ARM at comparable price points.
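Tick rate is what makes single-threaded speed matter. A server that targets a fixed number of ticks per second has a fixed time budget for each tick; Minecraft Java Edition, for example, targets 20 ticks per second, or 50 ms per tick. The simplified Python model below is our own and ignores jitter, garbage-collection pauses, and any work a server spreads across threads; it only shows what happens once a slower core pushes tick time past the budget.

```python
def tick_budget_ms(target_tps: float) -> float:
    """Milliseconds available to simulate one tick at the target rate."""
    return 1000.0 / target_tps

def achieved_tps(target_tps: float, tick_ms: float) -> float:
    """Ticks per second actually delivered when each tick takes tick_ms.

    The loop never runs faster than its target; once a tick overruns its
    budget, the server can only complete 1000 / tick_ms ticks per second.
    """
    return min(target_tps, 1000.0 / tick_ms)

if __name__ == "__main__":
    target = 20  # e.g. Minecraft Java Edition's fixed tick rate
    print(f"Budget per tick: {tick_budget_ms(target):.0f} ms")
    # Hypothetical per-tick costs for the same world on a faster and a slower core.
    for tick_ms in (30, 80):
        print(f"{tick_ms} ms per tick -> {achieved_tps(target, tick_ms):.1f} TPS")
```

In this model a world that needs 80 ms per tick drops to 12.5 TPS, which players experience as lag no matter how many other cores sit idle.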

Multi-service virtualization is the third signal worth paying attention to. If the goal is a Proxmox node running isolated VMs for a NAS, a reverse proxy, a VPN endpoint, and a home automation hub simultaneously, ARM is the wrong foundation. The hypervisor ecosystem and hardware virtualization support on x86 are simply in a different class.
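Before consolidating services onto one Proxmox node, a rough budget shows whether the plan fits. The Python sketch below totals statically allocated RAM and vCPUs for the four VMs named above; every VM size, the 2 GB host reservation, and the 16 GB host are hypothetical placeholders, not recommendations. RAM is treated as the hard limit and vCPUs as an oversubscription ratio, since VMs rarely use all their cores at once.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class VM:
    name: str
    ram_gb: float
    vcpus: int

def ram_fits(vms: List[VM], host_ram_gb: float, host_reserved_gb: float = 2.0) -> Tuple[bool, float]:
    """Return (fits, committed_gb) for static RAM allocations, keeping some RAM for the host."""
    committed = sum(vm.ram_gb for vm in vms)
    return committed <= host_ram_gb - host_reserved_gb, committed

def vcpu_ratio(vms: List[VM], host_threads: int) -> float:
    """vCPUs assigned per hardware thread; values above 1.0 mean oversubscription."""
    return sum(vm.vcpus for vm in vms) / host_threads

if __name__ == "__main__":
    # Hypothetical sizes for illustration only.
    plan = [
        VM("nas", 4, 2),
        VM("reverse-proxy", 1, 1),
        VM("vpn", 0.5, 1),
        VM("home-automation", 2, 2),
    ]
    fits, committed = ram_fits(plan, host_ram_gb=16)
    print(f"RAM committed: {committed} GB, fits: {fits}")
    print(f"vCPU ratio on 4 threads: {vcpu_ratio(plan, 4):.2f}")
```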

What to Look for in an x86 Homelab Board

Power draw matters for always-on hardware. Community benchmarks consistently show Intel N100 and N150 processors maintaining roughly 10-12W under mixed real-world workloads, including containers, light VMs, and media tasks running concurrently. That is competitive with ARM cluster setups attempting equivalent workloads, and it challenges the assumption that x86 automatically means higher energy costs.
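The wattage figures translate into yearly energy with simple arithmetic: average watts × 8,760 hours ÷ 1,000 gives kWh per year. The Python sketch below applies that to the roughly 3W Raspberry Pi 5 idle figure and the 10-12W N100/N150 mixed-load range quoted in this article; the electricity price is a placeholder to replace with your own tariff. The two figures describe different conditions (idle versus mixed load), so a fair comparison measures both machines at the wall under the same workload.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap years ignored

def annual_kwh(avg_watts: float) -> float:
    """Energy used by a device drawing avg_watts around the clock for a year."""
    return avg_watts * HOURS_PER_YEAR / 1000

def annual_cost(avg_watts: float, price_per_kwh: float) -> float:
    """Yearly electricity cost at a flat tariff (same currency as price_per_kwh)."""
    return annual_kwh(avg_watts) * price_per_kwh

if __name__ == "__main__":
    PRICE_PER_KWH = 0.30  # placeholder tariff, replace with your own
    for label, watts in [("Pi 5 idle", 3), ("N100/N150 low", 10), ("N100/N150 high", 12)]:
        kwh = annual_kwh(watts)
        cost = annual_cost(watts, PRICE_PER_KWH)
        print(f"{label:15s} {kwh:6.1f} kWh/yr  {cost:6.2f} per year")
```

At these figures the x86 board uses roughly 60 to 80 kWh more per year than an idle Pi 5, a gap that shrinks once the ARM board is actually loaded.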

PCIe expandability deserves careful attention. A PCIe 3.0 x4 slot opens the upgrade path to NVMe storage adapters, additional SATA controllers, 10GbE NICs, and low-power AI accelerator cards. Dual Ethernet is worth prioritizing for anyone planning network segmentation, a dedicated router VM, or multi-WAN setups. Onboard eMMC for the OS boot drive is a practical detail, because it leaves every SATA port and NVMe slot free for storage.

OS compatibility should be verified before committing to any board. Platforms built on standard Intel chipsets with mainline Linux kernel support tend to have the fewest surprises after deployment. Community activity around a specific board (forum threads, GitHub issues, and documented builds) is a reliable indicator of how well supported the hardware actually is day to day.
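One concrete check, whether on an installed system or a live USB session, is which kernel driver has claimed each network interface. On Linux the bound driver is visible as the symlink /sys/class/net/<interface>/device/driver; Intel's i225/i226 2.5GbE controllers, for instance, are handled by the in-tree igc driver. The Python sketch below reads those symlinks (ethtool -i <interface> reports the same information); the helper names are our own.

```python
import os
from typing import Dict

def driver_name(link_target: str) -> str:
    """The driver symlink points into /sys/bus/<bus>/drivers/<name>; keep <name>."""
    return os.path.basename(os.path.normpath(link_target))

def nic_drivers(sys_net: str = "/sys/class/net") -> Dict[str, str]:
    """Map each hardware-backed interface to its bound kernel driver."""
    if not os.path.isdir(sys_net):
        return {}
    drivers: Dict[str, str] = {}
    for iface in sorted(os.listdir(sys_net)):
        link = os.path.join(sys_net, iface, "device", "driver")
        # Virtual interfaces (lo, bridges, veth pairs) have no device/driver link.
        if os.path.islink(link):
            drivers[iface] = driver_name(os.readlink(link))
    return drivers

if __name__ == "__main__":
    for iface, driver in nic_drivers().items():
        print(f"{iface}: {driver}")
```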

| Factor | What to Prioritize |
| --- | --- |
| Processor | Intel N100 / N150 (Alder Lake-N) |
| Power draw | 10-12W under typical load |
| PCIe | x4 slot minimum for future expansion |
| Networking | Dual 2.5GbE or better |
| Storage I/O | Native SATA + NVMe support |
| OS support | Proxmox VE, TrueNAS, Debian, Ubuntu verified |

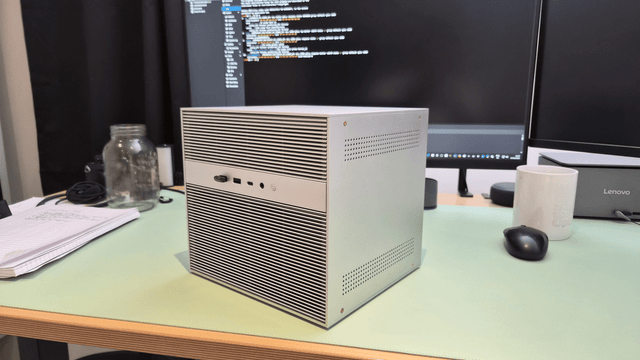

Build a Smarter Homelab Around a Single x86 Board

Most homelab setups do not start complex. They get there one device at a time, until the shelf holds three boards, two power bricks, and a management overhead nobody planned for. A single capable x86 board changes that dynamic entirely. Boards like ZimaBoard 2 consolidate NAS, virtualization, routing, and media serving into one fanless unit, cutting both physical clutter and ongoing maintenance. The upgrade from ARM is less a hardware swap and more a change in how much a homelab can realistically handle without fighting its own foundation.

FAQs

Q1: Does moving to an Intel N100-based x86 platform guarantee full AV1 hardware encoding?

Generally, no. Alder Lake-N (N100/N150) handles AV1 hardware decoding well, but AV1 hardware encoding typically requires newer Intel hardware such as Arc GPUs or Core Ultra processors. For heavy AV1 re-encoding on an N-series board in 2026, you'll either fall back to much slower software encoding on the CPU or add a discrete GPU over PCIe, since the chip has no AV1 encoding block.

Q2: Can I utilize ECC memory on entry-level x86 boards to prevent data corruption?

Typically not. Most consumer-grade N-series motherboards use non-ECC SODIMM or soldered LPDDR5 RAM. If your goal is enterprise-grade ZFS data integrity, you usually need to step up to Intel Atom C-series or Xeon-D platforms. ARM boards share this limitation, rarely offering ECC outside of expensive industrial-grade modules.

Q3: Is x86 inherently better for hosting local AI workloads like LLMs or Frigate object detection?

It depends. x86 wins on flexibility; you can easily add a Coral TPU or an NVIDIA GPU via PCIe. However, by 2026, some high-end ARM SoCs feature specialized NPUs that outperform entry-level x86 chips in specific object detection tasks. x86 remains the "safe bet" for broad software library compatibility.

Q4: Can I "hot-swap" my existing ARM Docker containers directly onto an x86 host?

No. While your configuration files (YAML) and data volumes are portable, container images are architecture-specific, so each service needs its amd64 variant. Fortunately, most modern images are published under multi-arch tags, so running docker compose pull on the new x86 machine usually fetches the correct variant automatically. The exceptions are images built only for ARM and Compose files that pin a service to platform: linux/arm64.
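Under the hood, a multi-arch tag points to an image index (Docker calls it a manifest list) that holds one manifest per platform, and the client picks the entry matching the host. The Python sketch below imitates that selection in simplified form: the index layout follows the OCI Image Index specification, but the digests are placeholders and the matching skips refinements real clients apply, such as falling back to a compatible variant. To see a real image's index, docker buildx imagetools inspect <image> prints it.

```python
import platform
from typing import Dict, Optional, Tuple

# platform.machine() values mapped to OCI (architecture, variant) pairs.
MACHINE_TO_OCI: Dict[str, Tuple[str, Optional[str]]] = {
    "x86_64": ("amd64", None),
    "amd64": ("amd64", None),    # Windows reports "AMD64"
    "aarch64": ("arm64", None),  # 64-bit Raspberry Pi OS and most ARM Linux
    "arm64": ("arm64", None),    # macOS on Apple silicon
    "armv7l": ("arm", "v7"),     # 32-bit ARM userland
}

def host_oci_platform(machine: Optional[str] = None) -> Optional[Tuple[str, Optional[str]]]:
    """Translate a machine string (default: this host) into an OCI (architecture, variant)."""
    return MACHINE_TO_OCI.get((machine or platform.machine()).lower())

def pick_manifest(index: dict, arch: str, variant: Optional[str] = None, os_name: str = "linux") -> Optional[str]:
    """Return the digest of the first index entry whose platform matches, else None."""
    for entry in index.get("manifests", []):
        plat = entry.get("platform", {})
        if plat.get("os") != os_name or plat.get("architecture") != arch:
            continue
        if variant is not None and plat.get("variant") != variant:
            continue
        return entry["digest"]
    return None

# A trimmed image index with placeholder digests (real entries also carry mediaType and size).
SAMPLE_INDEX = {
    "schemaVersion": 2,
    "mediaType": "application/vnd.oci.image.index.v1+json",
    "manifests": [
        {"digest": "sha256:placeholder-amd64", "platform": {"architecture": "amd64", "os": "linux"}},
        {"digest": "sha256:placeholder-arm64", "platform": {"architecture": "arm64", "os": "linux", "variant": "v8"}},
        {"digest": "sha256:placeholder-armv7", "platform": {"architecture": "arm", "os": "linux", "variant": "v7"}},
    ],
}

if __name__ == "__main__":
    plat = host_oci_platform()
    if plat is None:
        print("Unrecognised machine type:", platform.machine())
    else:
        print("This host would pull:", pick_manifest(SAMPLE_INDEX, *plat))
```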

Q5: Should I decommission my old ARM boards once the x86 server is live?

Not necessarily. Many efficient homelabs in 2026 take a hybrid approach and keep ARM boards as lightweight edge nodes. They work well as a low-power external quorum vote (a Proxmox QDevice running corosync-qnetd) for a two-node cluster, as dedicated Zigbee/Z-Wave gateways, or as remote WireGuard endpoints that stay online even during main server maintenance.
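The quorum role rests on majority voting: corosync, which Proxmox VE uses for cluster membership, treats a partition as quorate only while it holds more than half of the expected votes. The Python sketch below shows why a two-node cluster benefits from one extra external vote; it models only the plain majority rule and none of corosync's special-case options such as two_node or last_man_standing, and the function names are our own.

```python
def votes_needed(expected_votes: int) -> int:
    """Strict majority: more than half of the expected votes."""
    return expected_votes // 2 + 1

def quorate_after_failures(nodes: int, external_votes: int, failed_nodes: int) -> bool:
    """True if the surviving nodes plus external votes still form a majority.

    Assumes one vote per node and that the external vote (e.g. a QDevice)
    stays reachable.
    """
    expected = nodes + external_votes
    remaining = (nodes - failed_nodes) + external_votes
    return remaining >= votes_needed(expected)

if __name__ == "__main__":
    for nodes, external in [(2, 0), (2, 1), (3, 0)]:
        ok = quorate_after_failures(nodes, external, failed_nodes=1)
        print(f"{nodes} nodes + {external} external vote(s), one node down: quorate={ok}")
```

With two nodes and no external vote, losing either node leaves one vote out of two, which is not a majority; a third vote from a small ARM board lets the surviving node stay quorate.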
